Data Pool Overview

The Purchase-to-Pay data model makes use of all the views available in the global schema of this data pool.

Using Imported Tables in the P2P ‘Local’ Jobs

Although the standard configuration of the P2P connector does not currently use this option, you can import tables from other data pools and ‘push’ them to the local pool of the P2P connector.

Example Use Case

If you are already extracting and using some of the P2P-specific tables in other data pools that you work with, for example EBAN, you do not need to re-extract them in the local job of the P2P connector. Instead, you can follow the example workflow below:

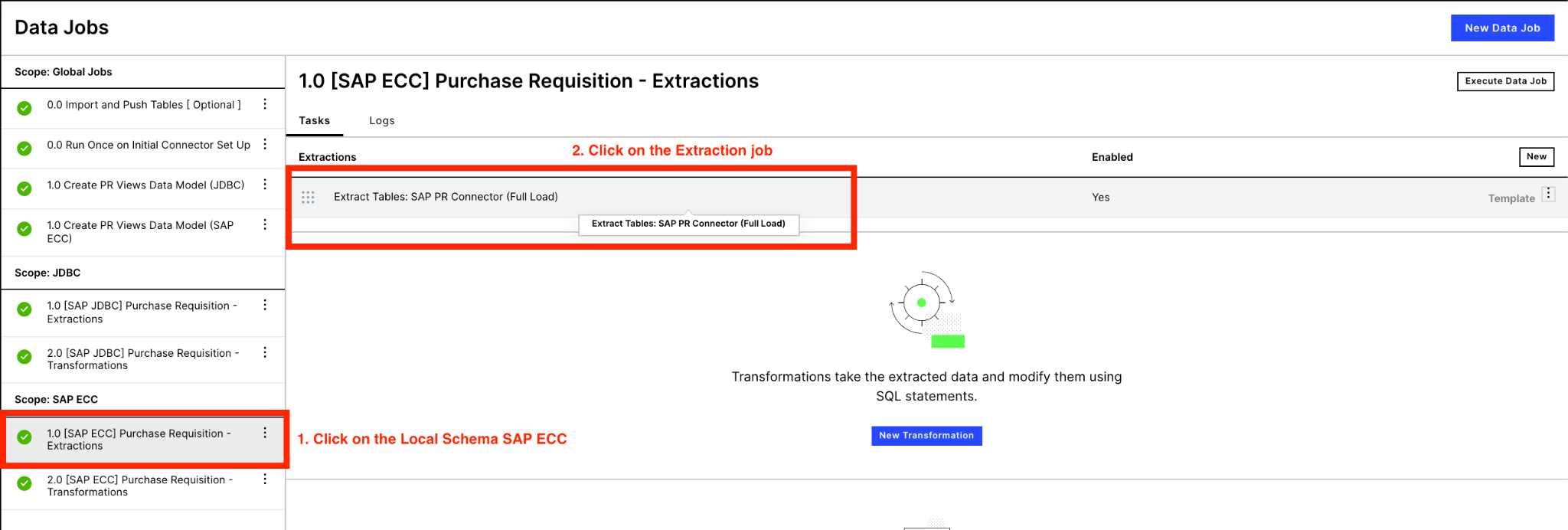

Click into the relevant extraction and then disable the desired table:

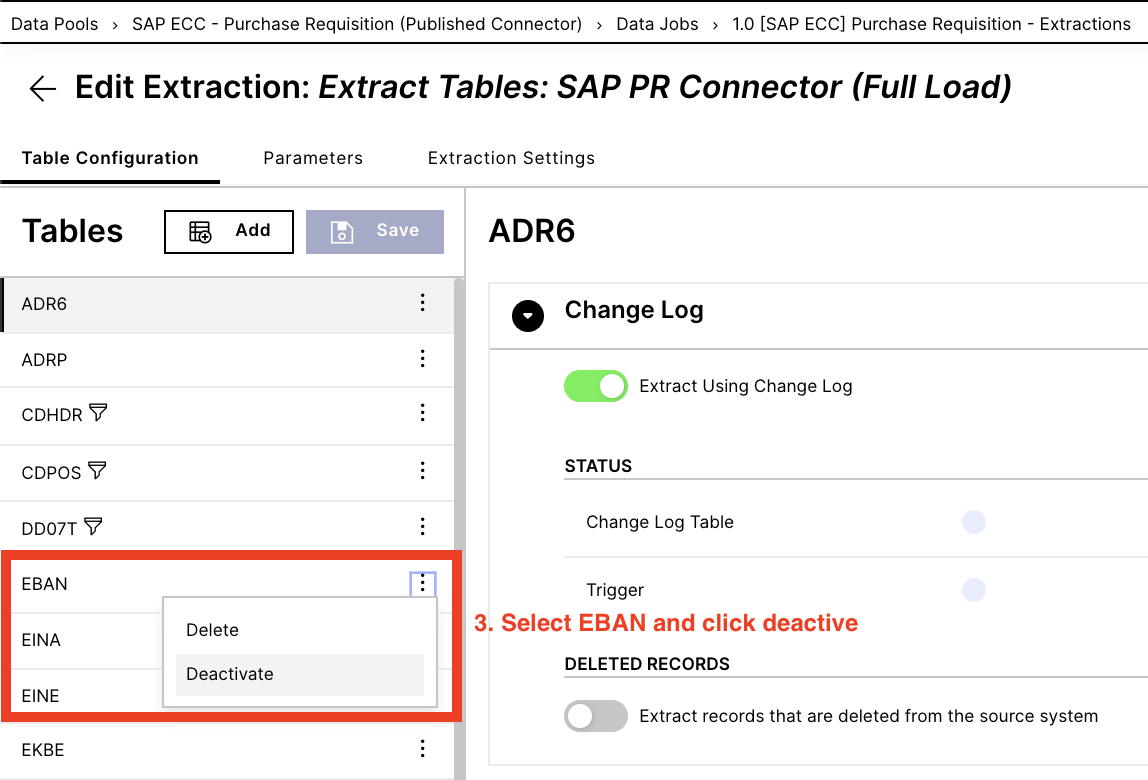

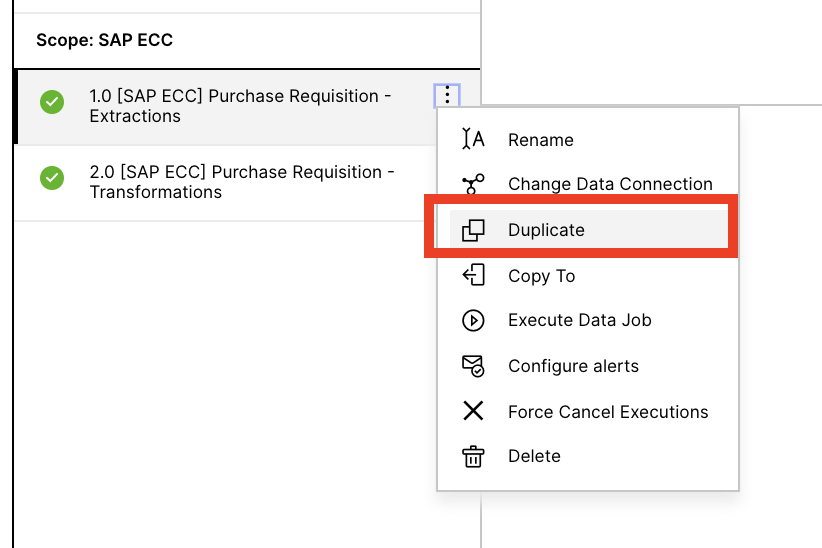

Navigate to the global job section and create a new global job named “0.1 Import and Push tables”. Then create a new transformation:

Inside the transformation, the objective is to create a table in the local job based on the table from the source data pool. Make sure that you update the data connection parameter in the FROM clause to the parameter of your own system.

DROP TABLE IF EXISTS <%=DATASOURCE:SAP_ECC%>."EBAN"; -- target local job and table you want to push to

CREATE TABLE <%=DATASOURCE:SAP_ECC%>."EBAN" AS ( -- same target table as in the DROP statement above

SELECT * FROM <%=DATASOURCE:SAP_ECC_-_PURCHASE_TO_PAY_SAP_ECC%>."EBAN" -- source table in source pool

);

Incorporate the new global job into your data job scheduling so that these import and push transformations are executed before the local P2P data job. This ensures that the EBAN table (from this example) is updated in the local job before the local transformations that depend on it are run.
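As a quick sanity check after the new global job has run, the row counts of the source table and the pushed copy can be compared. The query below is only a sketch: it reuses the data connection parameters from the example above and assumes the pushed table is named "EBAN" in the local schema, so adjust both to your own setup.

```sql
-- Sketch: compare row counts of the source table and the pushed copy.
-- Assumption: the data connection parameters match the example transformation
-- and the pushed table is called "EBAN" in the local schema.
SELECT 'source pool' AS TABLE_ORIGIN, COUNT(*) AS ROW_COUNT
FROM <%=DATASOURCE:SAP_ECC_-_PURCHASE_TO_PAY_SAP_ECC%>."EBAN"

UNION ALL

SELECT 'local job' AS TABLE_ORIGIN, COUNT(*) AS ROW_COUNT
FROM <%=DATASOURCE:SAP_ECC%>."EBAN";
```

If the two counts differ, one possible cause is that the push ran before the extraction in the source pool had finished.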

Multiple SAP Source Systems

It is often the case that more than one SAP system is required to get a full end-to-end view of the supply chain. The systems can be split by region, business unit, company code, etc. The Purchase-to-Pay data model is flexible enough to support this with just a few minor steps, as shown in the example below.

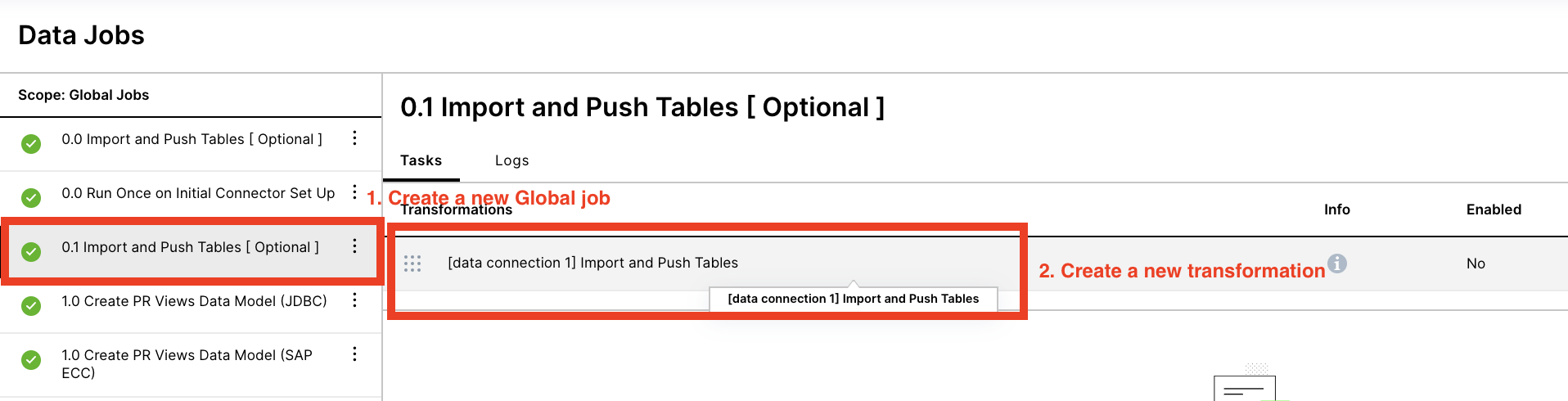

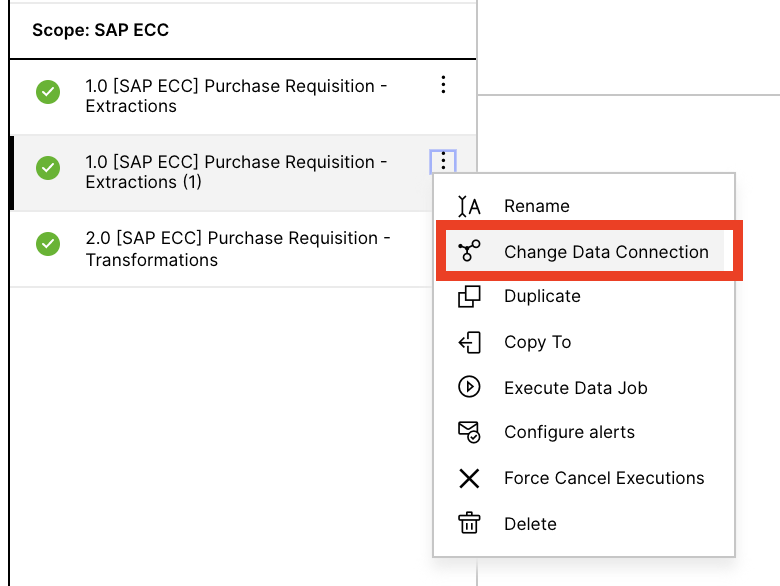

Step 1: Duplicate the local data job by clicking on the three dots of the local job and then clicking Duplicate. The duplication is important because it allows the same template transformations to be shared across the different data jobs. Do not simply copy and paste the data transformations.


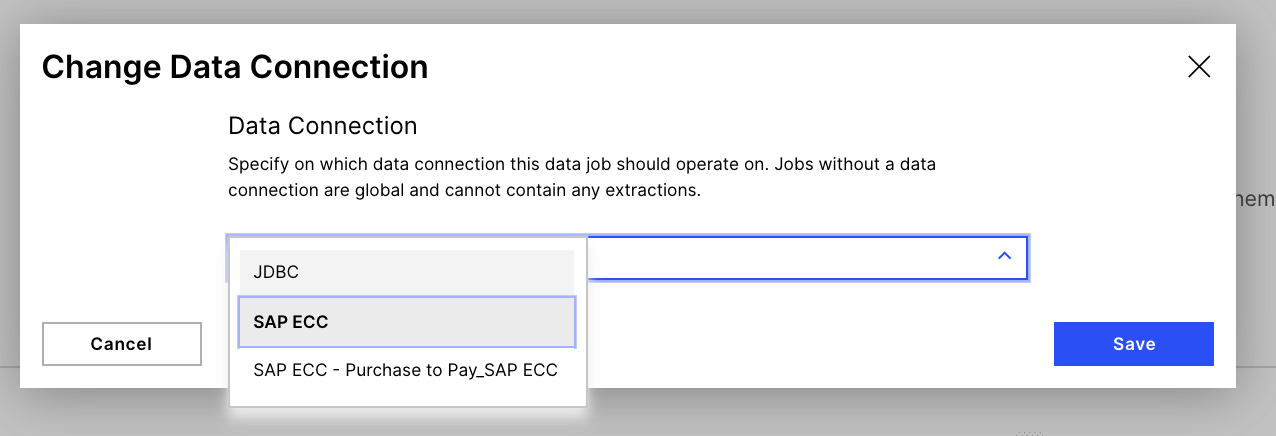

Step 2: Change the Data Connection of the newly duplicated data job to your other source system.


Step 3: Select your data connection (or connections) from the drop-down.


Step 4 (Optional): Change the name of the data job by removing the (1) that is added during duplication (skip this step if you do not mind the (1)).

Step 5: Run both (or as many as required) data jobs as usual, and remember to incorporate the new job into the Schedule.

Step 6: In the global P2P jobs (Job 1.0), update the transformations to reference both local jobs and union them together. It is also advisable to add the source system ID as an additional field in the table. This avoids n:m join issues in the data model that would otherwise occur if, for example, the same material number is used in both source systems. See below for an example using the _CEL_P2P_ACTIVITIES table. Do not forget to update the schema of the second source system.

DROP VIEW IF EXISTS "_CEL_P2P_ACTIVITIES";

CREATE VIEW "_CEL_P2P_ACTIVITIES" AS (

SELECT

'SOURCE_SYSTEM_ID_1' AS SOURCE_SYSTEM_ID

,"_CEL_P2P_ACTIVITIES".*

FROM <%=DATASOURCE:SAP_ECC%>."_CEL_P2P_ACTIVITIES" AS "_CEL_P2P_ACTIVITIES"

UNION ALL

SELECT

'SOURCE_SYSTEM_ID_2' AS SOURCE_SYSTEM_ID

,"_CEL_P2P_ACTIVITIES".*

FROM <%=DATASOURCE:SECOND_SOURCE_SYSTEM%>."_CEL_P2P_ACTIVITIES" AS "_CEL_P2P_ACTIVITIES"

);
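Once the unioned view exists, it may be worth checking that both source systems actually contribute rows and whether any keys overlap between them. The queries below are a sketch only: they rely on the SOURCE_SYSTEM_ID field added in the view above, and the "_CASE_KEY" column is an assumption about the structure of the activity table, so replace it with whichever key column your table uses.

```sql
-- Sketch: number of activity rows contributed by each source system.
SELECT SOURCE_SYSTEM_ID, COUNT(*) AS ACTIVITY_COUNT
FROM "_CEL_P2P_ACTIVITIES"
GROUP BY SOURCE_SYSTEM_ID;

-- Sketch: keys that occur in more than one source system.
-- Assumption: the activity table has a "_CASE_KEY" column.
SELECT "_CASE_KEY", COUNT(DISTINCT SOURCE_SYSTEM_ID) AS SYSTEM_COUNT
FROM "_CEL_P2P_ACTIVITIES"
GROUP BY "_CASE_KEY"
HAVING COUNT(DISTINCT SOURCE_SYSTEM_ID) > 1;
```

Any rows returned by the second query indicate keys shared across systems, which is the situation where joining on the key together with SOURCE_SYSTEM_ID helps keep the data model joins unambiguous.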